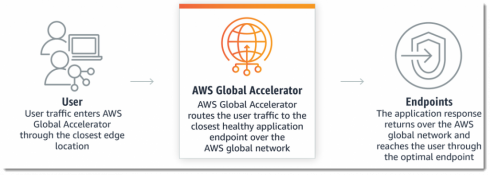

Amazon Web Services, Inc. announced a new way to streamline traffic to applications hosted on its global network, the AWS Global Accelerator service, during the company’s annual re:Invent conference this week. With Global Accelerator, traffic between users and hosted applications is routed along the shortest path to the closest healthy AWS edge location, accounting for weights configured by the application provider, Amazon explained.

“Global Accelerator uses Static IP addresses that serve as a fixed entry point to your applications hosted in any number of AWS Regions,” Shaun Ray, senior manager of developer relations with AWS, wrote in a blog post. “These IP addresses are Anycast from AWS edge locations, meaning that these IP addresses are announced from multiple AWS edge locations, enabling traffic to ingress onto the AWS global network as close to your users as possible.”

Global Accelerator currently supports applications running on Network Load Balancers, Application Load Balancers and Elastic IP addresses. Once an accelerator is provisioned and routing client traffic, Ray explained, the SourceIP of the requesting client provides the identifier for maintaining application state, and the static, globally unique Anycast IP addresses mean that the application won’t need to update clients as it scales.

“Having previously worked in an area where regulation required us to segregate user data by geography and abide by data sovereignty laws, I can attest to the complexity of running global workloads that need infrastructure deployed in multiple countries,” Ray wrote. “AWS Global Accelerator supports both TCP and UDP protocols, health checking of your target endpoints and will route traffic away from unhealthy applications. You can use an Accelerator in one or more AWS regions, providing increased availability and performance for your end users. Low-latency applications typically used by media, financial, and gaming organizations will benefit from Accelerator’s use of the AWS global network and optimizations between users and the edge network.”
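The routing behavior Ray describes (skip unhealthy endpoints, prefer the closest location, respect configured weights) can be illustrated with a short sketch. This is not AWS's implementation or API; the `Endpoint` fields, the `latency_ms` measurement and the `pick_endpoint` function are all hypothetical names chosen for illustration.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Endpoint:
    """Hypothetical model of an application endpoint behind an accelerator."""
    name: str
    region: str
    latency_ms: float  # assumed latency measured from the user's edge location
    weight: int        # assumed operator-configured share of traffic
    healthy: bool      # assumed result of the most recent health check


def pick_endpoint(endpoints, rng=random):
    """Pick a healthy endpoint in the lowest-latency region, by weight."""
    # Route away from endpoints that fail health checks or carry no weight.
    candidates = [e for e in endpoints if e.healthy and e.weight > 0]
    if not candidates:
        return None
    # Prefer the region that is closest to the user among what is left.
    best_region = min(candidates, key=lambda e: e.latency_ms).region
    in_region = [e for e in candidates if e.region == best_region]
    # Split traffic inside that region according to the configured weights.
    weights = [e.weight for e in in_region]
    return rng.choices(in_region, weights=weights, k=1)[0]


endpoints = [
    Endpoint("nlb-virginia", "us-east-1", 20.0, 100, healthy=False),
    Endpoint("alb-virginia", "us-east-1", 22.0, 0, healthy=True),
    Endpoint("alb-ireland", "eu-west-1", 90.0, 50, healthy=True),
]
print(pick_endpoint(endpoints).name)
```

In this example the nearest endpoint is unhealthy and its neighbor has a weight of zero, so the only remaining candidate is the endpoint in the more distant region.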

New EC2 instance options

During the conference, AWS also announced three new instance types for customers of its Elastic Compute Cloud (EC2) platform: A1 instances, P3dn GPU instances and C5n instances.

A1 instances run on a custom processor, the AWS Graviton, the first 64-bit Arm-based cloud computing option. AWS said that the more scalable architecture means that customers will see a cost reduction of up to 45 percent relative to other general-purpose EC2 instances for scale-out workloads.

“Although general purpose processors continue to provide great value for many workloads, new and emerging scale-out workloads like containerized microservices and web tier applications that do not rely on the x86 instruction set can gain additional cost and performance benefits from running on smaller and modern 64-bit Arm processors that work together to share an application’s computational load,” the company wrote in a release.

P3dn GPU instances will launch next week and are, according to AWS, the “most powerful GPU instances in the cloud for machine learning training.” The new instances handle four times the network bandwidth of the existing P3 instances. Performance upgrades, together with the ability to distribute machine learning workloads across multiple P3dn instances, will significantly reduce the time to train ML models, the company said.

C5n instances are the latest EC2 instance option for compute-intensive operations and can handle 100 Gbps of network throughput, versus the C5 instances’ 25 Gbps, a major boon for distributed and HPC applications.

“This performance increase delivered with C5n instances enables previously network bound applications to scale up or scale out effectively on AWS,” the company wrote. “Customers can also take advantage of higher network performance to accelerate data transfer to and from Amazon Simple Storage Service (Amazon S3), reducing the data ingestion wait time for applications and speeding up delivery of results.”
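To put the 25 Gbps and 100 Gbps figures in perspective, the back-of-the-envelope calculation below estimates how long moving a dataset would take at each rate. It assumes the link runs at the full quoted rate with no protocol overhead, and the 1 TB dataset size and the `transfer_seconds` helper are illustrative choices, not numbers from AWS.

```python
def transfer_seconds(size_bytes: float, link_gbps: float) -> float:
    """Ideal transfer time, assuming the link is saturated with no overhead."""
    bits = size_bytes * 8
    return bits / (link_gbps * 1e9)


ONE_TERABYTE = 1e12  # decimal terabyte, chosen only for illustration

for name, gbps in (("C5", 25), ("C5n", 100)):
    print(f"{name}: {transfer_seconds(ONE_TERABYTE, gbps):.0f} s")
# prints:
# C5: 320 s
# C5n: 80 s
```

Real transfers will be slower than these ideal numbers, but the fourfold ratio between the two instance families is what matters for network-bound workloads.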